Introduction

Amazon Kinesis Video Streams (KVS) makes it easy to securely stream video from connected devices to AWS for analytics, machine learning (ML), playback, and other processing. KVS automatically provisions and elastically scales all the infrastructure needed to ingest streaming video data from millions of devices. It durably stores, encrypts, and indexes video data in your streams, and allows you to access your data through easy-to-use APIs. KVS enables you to play back video for live and on-demand viewing, and quickly build applications that take advantage of computer vision and video analytics. On May 4th, 2022, KVS announced new features that make it easier to build scalable machine learning pipelines to analyze video data and deliver actionable insights to your customers. This blog post walks you through setting up the components required to convert video data stored in KVS into image formats suitable for ML processing.

Background

Today, customers want to add ML capabilities to their video streams to solve their business challenges. These challenges range from detecting people or pets to domain-specific challenges such as identifying defects in production. A common requirement in this process is converting video into image formats that can be delivered to ML pipelines. Prior to the launch of the image generation feature, customers who wanted to analyze their video stored in KVS needed to build, operate, and scale compute resources to transcode video into image formats such as JPEG and PNG. AWS offers many services that enable customers to build this solution on their own. However, building it from scratch would require many weeks of effort that ultimately wouldn’t add any differentiation to your product.

KVS now solves this problem by offering three key features:

Managed delivery of images to Amazon S3

On Demand Image Generation API

Delivery of stream events to an Amazon Simple Notification Service (Amazon SNS) topic

This blog post will focus on the Managed delivery of images to Amazon S3. Please refer to the API documentation page for more information about the other features available.

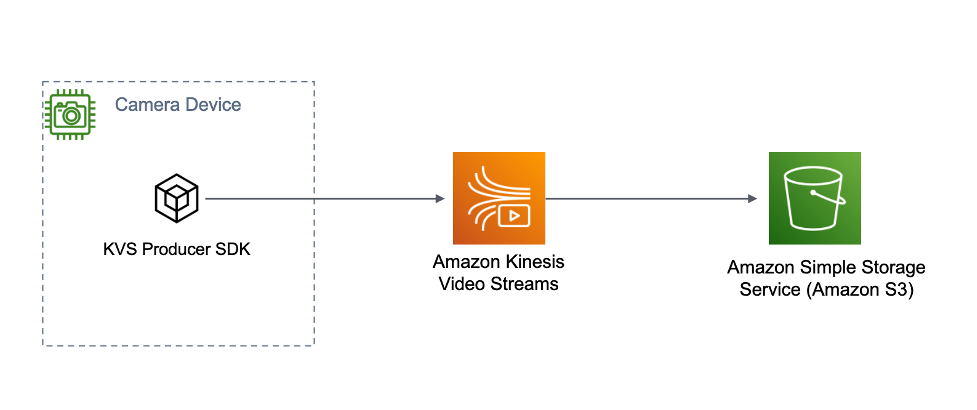

Solution Overview

Note: Implementing this solution will incur charges to your AWS bill. Please consult the pricing page for detailed information on pricing.

There are 3 components involved in building this solution:

The Amazon S3 Service

The Amazon S3 service stores the generated images along with metadata including timestamps and the associated KVS fragment ID. The fragment ID can be used to obtain the original video data used to generate the image.

KVS C++ Producer SDK

The KVS C++ Producer SDK is an open-source SDK available on GitHub. At a high level, the purpose of the SDK is to segment video data into fragments and send the fragments to the KVS service, where they are time-indexed and stored. The Producer SDK provides a method named putEventMetadata that adds a tag to fragments. This tag tells the KVS service to generate images automatically. The sample application provided by the SDK invokes this method automatically to exercise the image generation features of the service. Note: KVS also offers Producer SDKs in Java and C; these SDKs offer this tagging method as well.

The KVS service

The KVS service time-indexes and stores the video for a customer-defined retention period. Each camera in KVS is represented as a unique “stream.” The image generation feature of the KVS service must be configured on a per-stream basis. This configuration includes the image output format (JPEG or PNG), sampling interval, image quality, and the destination Amazon S3 bucket.

The following steps will guide you through the setup of each component. Let’s get started!

Building the Solution

Create an Amazon S3 Bucket

The KVS image generation feature requires an Amazon S3 bucket to be specified as part of the image generation configuration. The Amazon S3 bucket must be created in the same AWS Region in which you will use the KVS service. The example below creates a bucket in us-east-1:

aws s3 mb s3://sample-bucket-name --region us-east-1

Checkout and compile the KVS C++ Producer SDK

Note: This blog post uses the Kinesis Video Streams C++ Producer SDK along with a Raspberry Pi to demonstrate image generation with a real device. It is possible to compile the sample applications included with the KVS C++ Producer SDK on Mac, Windows, and Linux. Please refer to the FAQ section of the readme on GitHub for instructions on building for these platforms.

This process was tested on a Raspberry Pi 3 Model B Plus Rev 1.3 using the Raspberry Pi Camera V2.1. The Pi was imaged with Raspberry Pi OS “bullseye” using the image 2022-01-28-raspios-bullseye-armhf-lite.img. At the time of writing, Legacy Camera support must be enabled in raspi-config for the sample applications to access the camera module.

1. Install the required dependencies

sudo apt-get install pkg-config cmake m4 git

sudo apt-get install libssl-dev libcurl4-openssl-dev liblog4cplus-dev libgstreamer1.0-dev libgstreamer-plugins-base1.0-dev gstreamer1.0-plugins-base-apps gstreamer1.0-plugins-bad gstreamer1.0-plugins-good gstreamer1.0-plugins-ugly gstreamer1.0-tools

2. Clone the KVS C++ Producer SDK version 3.3.0

git clone --branch v3.3.0 https://github.com/awslabs/amazon-kinesis-video-streams-producer-sdk-cpp.git

3. Create the build directory

mkdir -p amazon-kinesis-video-streams-producer-sdk-cpp/build

cd amazon-kinesis-video-streams-producer-sdk-cpp/build

4. Build the SDK and sample applications

cmake .. -DBUILD_GSTREAMER_PLUGIN=ON -DBUILD_DEPENDENCIES=OFF

make

Obtain IAM credentials for the sample application

After building the SDK, the binary kvs_gstreamer_sample should be in the build directory. This application requires IAM credentials in order to access the KVS APIs. The permissions for the credentials should allow the following actions:

"kinesisvideo:PutMedia"

"kinesisvideo:UpdateStream"

"kinesisvideo:GetDataEndpoint"

"kinesisvideo:UpdateDataRetention"

"kinesisvideo:DescribeStream"

"kinesisvideo:CreateStream"

"s3:PutObject"
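As a minimal sketch, the actions listed above can be assembled into an IAM policy document. The function name `build_policy` and the wildcard `Resource` are assumptions made for illustration; in practice you would likely narrow the resource to the specific stream and bucket ARNs.

```python
import json

# Actions listed in this post for the sample application.
KVS_SAMPLE_ACTIONS = [
    "kinesisvideo:PutMedia",
    "kinesisvideo:UpdateStream",
    "kinesisvideo:GetDataEndpoint",
    "kinesisvideo:UpdateDataRetention",
    "kinesisvideo:DescribeStream",
    "kinesisvideo:CreateStream",
    "s3:PutObject",
]


def build_policy(actions=KVS_SAMPLE_ACTIONS, resource="*"):
    """Return an IAM policy document (as a dict) allowing the given actions.

    resource="*" is a placeholder assumption; scope it down to your own
    stream and bucket ARNs before using it outside a test account.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": list(actions), "Resource": resource}
        ],
    }


if __name__ == "__main__":
    # Print the policy as JSON so it can be pasted into the IAM console.
    print(json.dumps(build_policy(), indent=2))
```

The printed JSON can be attached to the IAM user or role whose credentials the sample application will use.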

After obtaining the credentials, you must export them as environment variables before executing kvs_gstreamer_sample. For example:

export AWS_ACCESS_KEY_ID=<your access key id>

export AWS_SECRET_ACCESS_KEY=<your secret access key>

export AWS_DEFAULT_REGION=<your region>
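Before launching the sample, it can help to confirm that all three variables are actually set in the current shell. The following is a small sketch of such a check; the helper name `missing_credentials` is hypothetical and not part of the SDK.

```python
import os

# Environment variables exported in the step above.
REQUIRED_VARS = ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY", "AWS_DEFAULT_REGION")


def missing_credentials(env=None):
    """Return the names of required variables that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_VARS if not env.get(name)]


if __name__ == "__main__":
    missing = missing_credentials()
    if missing:
        print("Missing environment variables: " + ", ".join(missing))
    else:
        print("All required environment variables are set.")
```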

Configuring the KVS Service

Configuring the KVS service to enable the image generation feature requires 3 steps.

1. Create a KVS stream in the desired AWS region.

aws kinesisvideo create-stream --stream-name <stream name> --data-retention-in-hours 12 --region us-east-1

This example command creates a stream with a data retention of 12 hours. Data will automatically be deleted after 12 hours. The image generation feature requires data retention to be greater than 0. Currently, 1 hour is the minimum configurable retention period.

2. Edit the following JSON with the StreamName, DestinationRegion, and Amazon S3 Bucket name. For more information on the other configuration items, please consult the KVS documentation page. Save this configuration using the filename update-image-generation-input.json.

{
  "StreamName": "<stream name>",
  "ImageGenerationConfiguration": {
    "Status": "ENABLED",
    "DestinationConfig": {
      "DestinationRegion": "<region name>",
      "Uri": "s3://<bucket name>"
    },
    "SamplingInterval": 3000,
    "ImageSelectorType": "PRODUCER_TIMESTAMP",
    "Format": "JPEG",
    "FormatConfig": {
      "JPEGQuality": "80"
    },
    "WidthPixels": 320,
    "HeightPixels": 240
  }
}

3. Use the AWS CLI to configure the image generation feature in the KVS service. If you later change the preceding JSON file, run this AWS CLI command again to apply the changes.

aws kinesisvideo update-image-generation-configuration --cli-input-json file://update-image-generation-input.json
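If you manage several streams, the configuration file from step 2 could also be generated rather than edited by hand. The sketch below mirrors the JSON shown in this post; the function name `build_image_generation_input` and its defaults are assumptions for illustration, and the only validation it performs is the JPEG/PNG choice mentioned earlier.

```python
import json


def build_image_generation_input(stream_name, bucket, region,
                                 image_format="JPEG", sampling_interval_ms=3000,
                                 width=320, height=240, jpeg_quality=80):
    """Build the input document for update-image-generation-configuration."""
    if image_format not in ("JPEG", "PNG"):
        raise ValueError("image_format must be 'JPEG' or 'PNG'")
    config = {
        "Status": "ENABLED",
        "DestinationConfig": {
            "DestinationRegion": region,
            "Uri": f"s3://{bucket}",
        },
        "SamplingInterval": sampling_interval_ms,
        "ImageSelectorType": "PRODUCER_TIMESTAMP",
        "Format": image_format,
        "WidthPixels": width,
        "HeightPixels": height,
    }
    if image_format == "JPEG":
        # The example in this post passes the quality as a string value.
        config["FormatConfig"] = {"JPEGQuality": str(jpeg_quality)}
    return {"StreamName": stream_name, "ImageGenerationConfiguration": config}


if __name__ == "__main__":
    # "demo-stream" and "sample-bucket-name" are placeholder names.
    doc = build_image_generation_input("demo-stream", "sample-bucket-name", "us-east-1")
    print(json.dumps(doc, indent=2))
```

Redirecting the output to update-image-generation-input.json produces a file that can be passed to the CLI command in step 3.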

Generating Images

At this point you should have created an Amazon S3 bucket, compiled the KVS C++ Producer SDK sample application, obtained the appropriate credentials, exported them in your environment, and configured the KVS service to enable image generation.

Execute the sample and pass the name of the stream created in a preceding section as the first argument.

./kvs_gstreamer_sample <stream name>

Open the Amazon S3 console and you should see images being generated in your desired S3 bucket.

Conclusion

In this blog post, I demonstrated how to use the image generation feature of Kinesis Video Streams to build a pipeline you can use for machine learning. Now that Amazon S3 is storing your images, you can use Amazon S3 Event Notifications to trigger your own ML pipeline or trigger analysis by Amazon Rekognition. I encourage you to try out this new feature, and remember to delete the resources when you are finished. Happy building!
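As a starting point for the S3 Event Notifications approach, the sketch below extracts the bucket and key of each new image from a notification event. It relies on the standard S3 event layout (`Records[].s3.bucket.name` and `Records[].s3.object.key`); the `handler` entry point, the file-suffix filter, and the print statement are illustrative assumptions to be replaced with a call into your own pipeline.

```python
from urllib.parse import unquote_plus

# Assumed suffixes for generated images; adjust to match your bucket contents.
IMAGE_SUFFIXES = (".jpg", ".jpeg", ".png")


def image_objects(event):
    """Return (bucket, key) pairs for image objects in an S3 event notification."""
    found = []
    for record in event.get("Records", []):
        s3 = record.get("s3", {})
        bucket = s3.get("bucket", {}).get("name")
        # Object keys arrive URL-encoded in S3 event notifications.
        key = unquote_plus(s3.get("object", {}).get("key", ""))
        if bucket and key.lower().endswith(IMAGE_SUFFIXES):
            found.append((bucket, key))
    return found


def handler(event, context):
    """Hypothetical AWS Lambda entry point for the notification."""
    for bucket, key in image_objects(event):
        # Replace this print with a call into your ML pipeline.
        print(f"New image ready for analysis: s3://{bucket}/{key}")
```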
