In this two-part series, I describe how to reduce the latency of streaming media managed by Amazon Kinesis Video Streams (KVS) and how latency of less than 2 seconds can be delivered with robust video quality across a variety of network conditions. Lastly, I provide a practical demonstration showing that, with the Amazon Kinesis Video Stream Web Viewer, latency of 1.4 seconds is possible with KVS.

In part 1, I cover the fundamentals of streaming media, KVS operation, common design patterns and the leading contributing factors to latency in streaming media managed by KVS.

In part 2, I describe techniques for configuring KVS, the media producer and the media players for optimal latency. Finally, I introduce the Amazon Kinesis Video Stream Web Viewer and perform a number of experiments on KVS to validate the latency figures you can expect to achieve under a variety of settings and configurations.

Amazon Kinesis Video Streaming Media Format

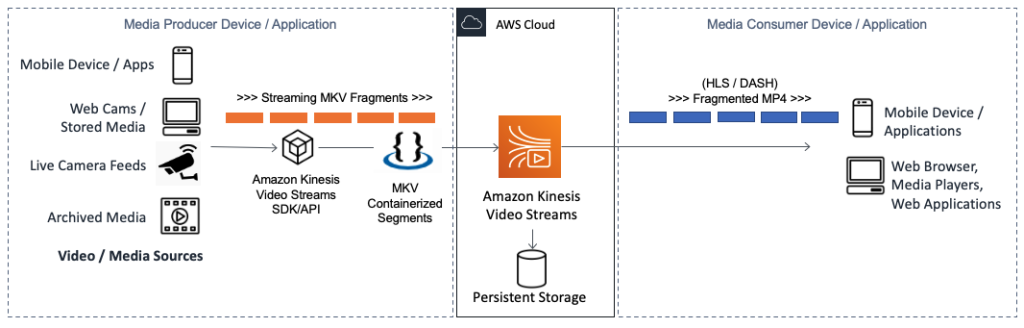

Media is ingested into KVS using the GStreamer KVSSink plugin, the KVS Producer Libraries or the KVS API, but in all cases the underlying ingress media format is Matroska (MKV) containerized segments. On the consumer side, live media is most commonly exposed to web and mobile applications using HTTP Live Streaming (HLS) delivered as fragmented MP4. Both the producer and the consumers in this common KVS design pattern rely on streaming media techniques. This blog focuses on how to reduce the end-to-end latency of streaming media using this common KVS design pattern.

Figure 1 – KVS Ingestion and storage of media presented as HLS to client applications.

Contributing Factors of Latency for Live Media on KVS

End-to-end latency of streaming media via KVS is the sum of video encoding and decoding, fragmentation delay, network latency, KVS buffering and fragment buffering by the media player. To put a scale to these factors, the table below shows indicative latency values for a KVS media stream using parameters poorly selected for live media, resulting in a total latency of just under 9.5 seconds.

Table 1 – Indicative latency values for a KVS stream with settings poorly selected for live media.

While the numbers presented here are only indicative, they represent a common starting point for many new users of KVS and clearly show that, to improve latency, we must consider video fragmentation and fragment buffering in more detail.

Streaming Media and Video Fragmentation

For reliable and timely transmission of live media over the Internet, groups of encoded video frames are containerized in one of a number of formats such as MPEG-4 (MP4), QuickTime Movie (MOV) or Matroska (MKV). As each group of frames is wrapped in a media container, it becomes a self-contained transmissible unit known as a fragment which forms the basis of what we refer to as streaming media. The time it takes for a frame to be recorded, transmitted, buffered and displayed is what the end user experiences as latency.

Figure 2 – H.264/265 encoded frames transmitted as streaming media fragments.

Video Encoding and Fragment Length

H.264 and H.265 video encoders achieve a high compression ratio by transmitting only the pixels that have changed between frames. Each fragment must start with a key-frame (or I-Frame), which contains all of the picture data (pixels) and provides a reference for the Predicted-Frames (P-Frames) and Bi-directional Frames (B-Frames) that follow. For this purpose, it’s enough to understand that these frame types work together to describe the changing picture without transmitting unchanged data until the next key-frame is scheduled.

The number of frames between key-frames is set by the encoder and is referred to as the key-frame interval. It’s common in streaming media, and in KVS in particular, to trigger a new fragment on the arrival of each key-frame, so fragment length (measured in seconds) can generally be calculated as:

Fragment Length (secs) = Key-Frame Interval (# of frames) / Frames Per Second (fps).

Figure 3 – H.264/265 frame types.

For example, a media stream with a key-frame interval of 30 and a frame rate of 15 fps will receive a key-frame every 2 seconds, which with KVS video producers will generally result in 2-second fragments. This is relevant because fragment length has a direct relationship to latency in streaming media.
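The fragment length formula above can be checked with a short Python sketch. The function name `fragment_length_secs` is my own, chosen for illustration, and the second call uses a hypothetical key-frame interval of 15 to show how shortening the interval shortens the fragment.

```python
def fragment_length_secs(key_frame_interval: int, frames_per_second: float) -> float:
    """Fragment length in seconds, assuming a new fragment starts on every key-frame."""
    if key_frame_interval <= 0 or frames_per_second <= 0:
        raise ValueError("key-frame interval and frame rate must be positive")
    return key_frame_interval / frames_per_second


# The example from the text: key-frame interval of 30 at 15 fps.
print(fragment_length_secs(30, 15))  # 2.0

# Hypothetical comparison: halving the key-frame interval halves the fragment length.
print(fragment_length_secs(15, 15))  # 1.0
```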

Media Player Fragment Buffering

All media players buffer some number of fragments to avoid degraded video quality during network instability. Media players include desktop players such as QuickTime and VLC, application libraries such as react-player used in custom web and mobile applications, and the default media players found in the various web browsers.

Media player application libraries often expose the fragment buffer settings programmatically, whereas desktop and browser-based media players use preconfigured settings for live and on-demand video playback.

Fragment buffering sits directly between the received media and the consumer and can contribute as much as 30 seconds of latency if not specifically configured for live video. Fragment buffering by the media player is by far the leading contributor to latency for streaming media and can be calculated as follows:

Fragment Buffer Latency (secs) = Number of Buffered Fragments x Fragment Length (secs).

Figure 4 – Media player buffer induced latency.

Therefore, to reduce latency, we must reduce the number of buffered fragments in the media player. Interestingly, most media player buffer settings are based on the number of fragments rather than total buffering time, so reducing the fragment length has a similar effect of reducing latency in streaming media.
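As a rough illustration of the buffer latency formula, the sketch below compares two fragment lengths for the same buffer depth. The buffer depth of 3 fragments is an assumed value chosen only for the arithmetic, not a setting taken from any particular media player.

```python
def fragment_buffer_latency_secs(buffered_fragments: int, fragment_length_secs: float) -> float:
    """Latency added by the player buffer: number of buffered fragments x fragment length."""
    return buffered_fragments * fragment_length_secs


# Assumed buffer depth for illustration only; real players differ.
BUFFERED_FRAGMENTS = 3

for length in (2.0, 1.0):
    latency = fragment_buffer_latency_secs(BUFFERED_FRAGMENTS, length)
    print(f"{BUFFERED_FRAGMENTS} fragments x {length} s = {latency} s of buffer latency")
```

With the buffer depth held constant, halving the fragment length halves the buffer-induced latency, which is why fragment length matters even when the player's buffer setting cannot be changed.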

HLS Playlist

HTTP Live Streaming (HLS) is an adaptive bitrate streaming protocol that exposes media fragments to media players via an HLS Media Playlist (.m3u8) file. This is a clear-text manifest file that contains media configuration tags and URL links to the required media fragments. Below is an extract of an HLS Media Playlist:

The fields beginning with # are configuration tags. For example, the #EXT-X-PLAYLIST-TYPE:EVENT tag indicates that fragments will continue to be appended to the manifest for as long as the live media producer is active. When it is set, the media player will continually poll the playlist for updated fragments until an #EXT-X-ENDLIST tag (the HLS End Event) is received.

The getMP4MediaFragment.mp4 fields are appended to the base URL to create complete links to individual media fragments in KVS.
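To make the playlist structure concrete, here is a minimal Python sketch that separates configuration tags from fragment links and resolves each link against the playlist URL. The playlist text, host name, fragment numbers and session token are all hypothetical placeholders written for this illustration; they are a simplified stand-in and not actual KVS output.

```python
from urllib.parse import urljoin

# Hypothetical, simplified playlist for illustration only (not real KVS output).
SAMPLE_PLAYLIST = """\
#EXTM3U
#EXT-X-PLAYLIST-TYPE:EVENT
#EXT-X-TARGETDURATION:2
#EXTINF:2.0,
getMP4MediaFragment.mp4?FragmentNumber=EXAMPLE-1&SessionToken=EXAMPLE-TOKEN
#EXTINF:2.0,
getMP4MediaFragment.mp4?FragmentNumber=EXAMPLE-2&SessionToken=EXAMPLE-TOKEN
"""

# Placeholder URL the playlist is assumed to have been fetched from.
PLAYLIST_URL = "https://example.invalid/hls/v1/getHLSMediaPlaylist.m3u8?SessionToken=EXAMPLE-TOKEN"


def parse_media_playlist(text: str, playlist_url: str) -> tuple[list[str], list[str]]:
    """Split a media playlist into configuration tags and absolute fragment URLs."""
    tags: list[str] = []
    fragment_urls: list[str] = []
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line:
            continue
        if line.startswith("#"):
            tags.append(line)  # configuration tag
        else:
            # Relative fragment link: resolve it against the playlist URL.
            fragment_urls.append(urljoin(playlist_url, line))
    return tags, fragment_urls


tags, fragment_urls = parse_media_playlist(SAMPLE_PLAYLIST, PLAYLIST_URL)

# The stream is still live while no end-of-list tag has been appended.
still_live = "#EXT-X-ENDLIST" not in tags

print("Still live:", still_live)
for url in fragment_urls:
    print(url)
```

A real media player does this work internally, re-fetching the playlist periodically and downloading any fragments that have been appended since the last poll.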

KVS provides the means to request an HLS Media Playlist with a latency-optimized configuration to support low-latency media, even when users consume video on the device and media player of their choice.

Conclusion

In part 1 of this series on how to reduce the end-to-end latency of media managed by Amazon Kinesis Video Streams, I discussed the fundamentals of streaming media. You have also learned about KVS operation, common design patterns and the leading contributing factors to latency of streaming media in KVS.

In part 2, I introduce techniques for reducing the latency of streaming media by reducing fragment lengths and media player buffer size. You will learn how to select optimal HLS playlist settings when requesting an HLS URL from the KVS API for low-latency media. You will also learn the recommended settings for robust video quality and latency-optimized media streams, and how to test them using the Amazon KVS Web Viewer.

About the author

Dean Colcott is a Snr IoT and Robotics Specialist Solution Architect at Amazon Web Services (AWS) and a SME for Amazon Kinesis Video Streams. He has areas of depth in distributed applications and full stack development, video and media, video analytics and computer vision, Industrial IoT and Enterprise data platforms. Dean’s focus is to bring relevance, value and insight from IoT, Industrial data, video and data generating physical assets within the context of the Enterprise data strategy.
